AI Data Centers Are Overwhelming Local Power Grids: The 2026 Construction Boom

3,000 new data centers. 90+ gigawatts by 2030. Residential electricity rates climbing. This is what happens when AI's infrastructure boom collides with a grid that wasn't designed for it.

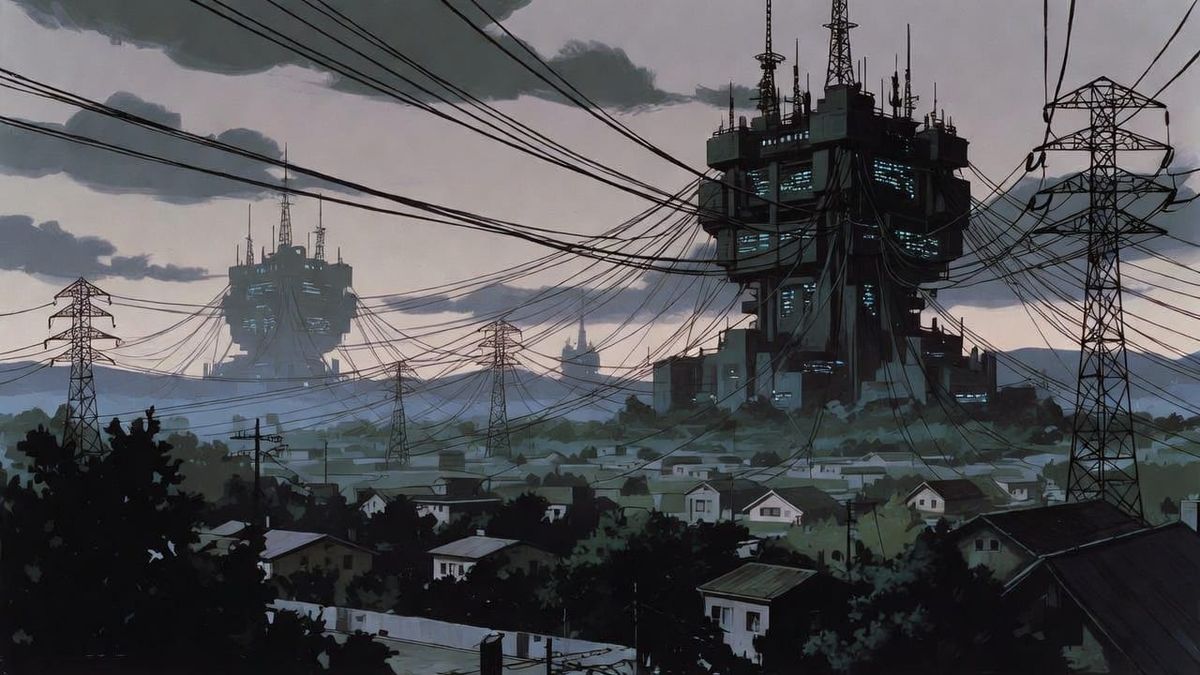

What happens when the fastest infrastructure buildout in human history runs headfirst into a power grid designed for a slower, smaller economy?

The answer is playing out right now across the United States. Data centers are rising at a pace that outstrips the ability of utilities to power them, and the consequences are beginning to show up in residential electricity bills, reliability incidents, and a fundamental restructuring of how major tech companies think about energy supply.

The numbers are staggering. Nearly 3,000 new data centers are under construction or planned in the United States as of early 2026, on top of over 4,000 already operational [Visual Capitalist, 2026]. The major hyperscalers (Amazon, Microsoft, Google, Meta, and OpenAI) are collectively spending $400 billion or more on capital expenditures in 2026 alone, with a significant portion flowing directly into physical infrastructure [Belfer Center, 2026]. Power capacity for US data centers is projected to grow from roughly 30 gigawatts in 2025 to over 90 gigawatts by 2030, representing a tripling in just five years.
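A tripling in five years is easier to compare with other growth figures when it is expressed as an annual rate. The short Python sketch below computes the compound annual growth rate implied by the 30 GW and 90 GW projections. The function name is ours, for illustration only.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1

# Capacity figures as quoted: ~30 GW (2025) growing to ~90 GW (2030).
rate = implied_cagr(30, 90, 2030 - 2025)
print(f"Implied growth: {rate:.1%} per year")  # roughly 24.6% per year
```

At roughly 25% compound growth per year, capacity doubles a little more than every three years.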

This is not a story about technology. This is a story about physics, economics, and the surprisingly fragile infrastructure that powers modern life.

The Scale of What Is Being Built

The construction boom defies easy comprehension. Texas leads the nation with approximately 140 data centers currently under construction, followed closely by Virginia with 136 [Visual Capitalist, 2026]. These are not small facilities. They are massive complexes that can span millions of square feet and consume power equivalent to small cities.

Consider Vantage Data Centers' Frontier campus in Shackelford County, Texas. The project represents a $25 billion investment on 1,200 to 2,000 acres, designed to deliver up to 2 gigawatts of IT load across ten individual data facilities (Visual Capitalist). To put that in perspective, a single gigawatt is enough to supply roughly 750,000 homes at average household consumption; during peak demand, when each home draws more, the number is considerably smaller.
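Homes-equivalent figures like this one come from dividing capacity by an assumed average household draw. The sketch assumes an average US home uses about 1.2 to 1.35 kW averaged over the year (roughly 10,500 to 11,800 kWh annually). Those inputs are assumptions for illustration, not figures from the sources cited. At about 1.33 kW per home, 1 GW works out to roughly 750,000 homes.

```python
def homes_supplied(capacity_gw: float, avg_household_kw: float) -> float:
    """Number of households whose average draw adds up to `capacity_gw`."""
    capacity_kw = capacity_gw * 1_000_000  # 1 GW = 1,000,000 kW
    return capacity_kw / avg_household_kw

# Assumption: an average US home draws roughly 1.2-1.35 kW over a year.
# Peak-hour draw is several times higher, so peak equivalents are much lower.
for kw in (1.2, 1.33):
    print(f"{kw} kW/home -> {homes_supplied(1.0, kw):,.0f} homes per GW")
```

By the same arithmetic, Frontier's planned 2 GW corresponds to roughly 1.5 million average homes.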

OpenAI's Stargate initiative, developed in partnership with Oracle and SoftBank, aims for approximately 10 gigawatts of national capacity. The Abilene, Texas location alone is scaling toward 1.2 gigawatts or more, with total facility space targeting over 4 million square feet (Visual Capitalist). This is infrastructure being built at a pace more commonly associated with industrial projects in rapidly developing nations than with mature US markets.

The geographic concentration is equally striking. Texas and Virginia together account for more data center projects underway than the rest of the country combined. After these two states, there is a sharp drop: Georgia has 56 facilities under construction, Ohio has 51, and Arizona has 35 [Visual Capitalist, 2026]. Twelve states have none currently under construction, while another eleven have fewer than five.

This concentration is not accidental. Data centers cluster where land is available, power is cheap, fiber connectivity is dense, and permitting is favorable. The American Southwest and upper South have become the epicenters of a building boom that shows no signs of slowing. The JLL 2026 Global Data Center Outlook notes that hyperscale campuses increasingly exceed 1 million square feet per building, with some targeting 4 million or more at full buildout.

The Power Math That Drives Everything

Understanding why this matters requires understanding just how much electricity these facilities consume. A typical hyperscale AI data center uses as much power as 80,000 to 100,000 households (Belfer Center). The largest facilities can be twenty times that scale.

The physics of modern AI hardware explains why this is happening now. AI workloads are extraordinarily power-intensive. A traditional data center rack might draw 7 to 10 kilowatts. An AI-optimized rack with GPU clusters draws 30 to 100 kilowatts or more (IEA). A single modern GPU can consume 1.2 to 1.4 kilowatts under load, and training frontier models requires clusters of 100,000 or more of these chips running continuously for weeks or months.
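The GPU figures translate directly into facility-scale power. This sketch multiplies GPU count by per-GPU draw, then applies a hypothetical `overhead` multiplier. That multiplier is an assumption standing in for servers, networking, cooling, and power conversion; it is not a number from the article.

```python
def cluster_power_mw(num_gpus: int, kw_per_gpu: float, overhead: float) -> float:
    """Facility power for a GPU cluster, in megawatts.

    `overhead` is a hypothetical multiplier (similar in spirit to PUE)
    covering everything besides the GPUs themselves.
    """
    it_kw = num_gpus * kw_per_gpu
    return it_kw * overhead / 1000  # kW -> MW

# 100,000 GPUs at 1.2-1.4 kW each, per the figures in the text.
for kw in (1.2, 1.4):
    gpus_only = cluster_power_mw(100_000, kw, overhead=1.0)
    with_overhead = cluster_power_mw(100_000, kw, overhead=1.5)
    print(f"{kw} kW/GPU: {gpus_only:.0f} MW GPUs alone, "
          f"~{with_overhead:.0f} MW with an assumed 1.5x overhead")
```

Even with no overhead at all, 100,000 GPUs at these ratings draw 120 to 140 MW, which is why a single training cluster behaves like a utility-scale load.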

This creates a demand profile that is fundamentally different from earlier computing workloads. Traditional data centers had variable loads that tracked business hours and seasonal patterns. AI workloads run at near-maximum capacity around the clock, creating a steady base load that utilities must plan around without the traditional patterns that allowed for load balancing.

The numbers compound quickly. Data centers consumed approximately 4% of US electricity in 2024. By 2028 to 2030, most projections suggest this share will double or triple, reaching 8% to 12% of total national electricity consumption [Belfer Center, 2026]. In some regional grids, particularly in Northern Virginia and Texas, data center demand is already approaching or exceeding 20% of total capacity.
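Percent shares are easier to compare with the gigawatt figures elsewhere in this piece when converted into energy and average power. The sketch assumes total US consumption of about 4,000 TWh per year, held flat; both the total and the flat-demand simplification are assumptions, not figures from the sources cited.

```python
HOURS_PER_YEAR = 8760

def share_to_energy(share: float, national_twh: float) -> tuple[float, float]:
    """Convert a share of national consumption to (TWh/year, average GW)."""
    twh = share * national_twh
    avg_gw = twh * 1000 / HOURS_PER_YEAR  # 1 TWh = 1,000 GWh
    return twh, avg_gw

# Assumption: ~4,000 TWh of annual US consumption, held flat for simplicity.
NATIONAL_TWH = 4000
for share in (0.04, 0.08, 0.12):
    twh, gw = share_to_energy(share, NATIONAL_TWH)
    print(f"{share:.0%}: {twh:.0f} TWh/yr, ~{gw:.0f} GW average draw")
```

Because the average-draw column spreads energy evenly across all 8,760 hours of the year, it is not directly comparable to nameplate capacity figures such as the 90 GW projection, which describe how much facilities can draw rather than what they draw on average.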

The challenge is that this demand is arriving faster than the grid can accommodate it. Data centers can be built in 18 to 24 months. A new transmission line takes 7 to 15 years. Interconnection queues for new generation or large loads commonly stretch 4 to 7 years (Belfer Center). The mismatch is not incidental. It is structural, and it is creating a crisis that regulators and utilities are only beginning to grapple with.

The Infrastructure Time Gap

The core problem is deceptively simple: the grid was not designed for this, and rebuilding it takes far longer than building the facilities that stress it.

When a hyperscale data center wants to connect to the grid today, it faces a queue that, in major load centers, stretches years into the future. The PJM Interconnection, which manages the bulk electric grid across much of the mid-Atlantic and Midwest, reported that data centers drove over $9.3 billion in added capacity costs recently, with household impacts estimated at $15 to $18 per month in some areas (Belfer Center). Virginia's Dominion Energy proposed monthly increases of $8.50 to $20 for residential customers tied partly to data center demand growth.

These numbers do not capture the full picture. Utilities must build transmission infrastructure to serve new data center clusters, and these costs are often socialized across all ratepayers rather than borne by the data centers themselves. Residential customers in Northern Virginia, for instance, have seen their bills jump from roughly $100 to over $280 in some cases as infrastructure costs accumulate (Consumer Reports).

The irony is that the data centers often negotiate favorable rates from utilities precisely because they represent large, predictable loads that utilities want to attract. Meanwhile, the transmission and distribution upgrades needed to serve them get spread across all customers. This creates a situation where residential and small business ratepayers are subsidizing infrastructure for an industry that is generating enormous profits and largely choosing where to locate based on tax incentives and power costs.

The time gap also creates reliability risks that are only now becoming visible. In July 2024, a voltage fluctuation in Northern Virginia triggered the simultaneous disconnection of 60 data centers, causing a 1.5 gigawatt power surplus that forced emergency adjustments to prevent cascading outages [Belfer Center, 2026]. A 1.5 gigawatt swing in seconds is comparable in size to multiple large power plants tripping offline at once, but in reverse: instead of supply suddenly vanishing, demand did, leaving generators producing power with nowhere to go. The grid narrowly avoided a major incident, but the episode revealed how concentrated data center loads can create sudden destabilizing forces.

Companies Building Their Own Power

Faced with interconnection queues that stretch years into the future, major tech companies have made a calculation: instead of waiting for utilities to build transmission lines, they will build their own power plants.

The trend toward self-sufficiency represents a fundamental shift in how these companies think about infrastructure. xAI's Colossus facility in Memphis illustrates the approach. The operation initially deployed 35 or more gas turbines to provide rapid power delivery, supplemented by battery storage through Tesla Megapacks and a planned 30 megawatts of solar (Reuters). The reported initial power capacity of around 420 megawatts is scaling toward a planned 1.2 gigawatts of primary gas-fired generation.

Meta has negotiated nuclear power agreements totaling up to 6.6 gigawatts through deals with Vistra and small modular reactor developers Oklo and TerraPower (Reuters). Microsoft signed a 20-year power purchase agreement that underpins the planned restart of Three Mile Island Unit 1, specifically to power its data centers. Google has invested in small modular reactors (SMRs) through Kairos Power and is exploring advanced geothermal and fusion approaches.

The list goes on. Amazon is investing in small modular reactors through X-energy. Oracle is planning SMR-powered data center sites. Alphabet, Google's parent company, agreed to acquire Intersect Power, securing solar, wind, and storage assets to ensure power availability for its expanding operations.

These companies are deciding that they are not just technology firms but also, effectively, energy companies. The phrase "shadow grid" has entered industry vocabulary to describe these behind-the-meter installations that operate independently of or alongside utility service.

The White House in March 2026 announced what it called a Ratepayer Protection Pledge, signed by Amazon, Google, Meta, Microsoft, OpenAI, Oracle, and xAI, committing these companies to fund new generation and transmission capacity for their loads rather than passing costs to residential ratepayers (Belfer Center). Whether this pledge will hold or represent meaningful protection remains to be seen, but it reflects an acknowledgment that the current trajectory is politically unsustainable.

Texas as the Laboratory

No place illustrates these dynamics more clearly than Texas.

The state leads the nation in data center construction, with 140 facilities currently under construction and another 405 already operational [Visual Capitalist, 2026]. ERCOT, the independent grid operator that manages about 90% of Texas electricity, projects that peak summer demand could approach 145 gigawatts by 2031, up from 85 gigawatts in 2024. This represents an acceleration unlike anything seen in the grid's history, driven primarily by data center demand that could account for 32 gigawatts of the increase [Belfer Center, 2026].
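The ERCOT forecast can be unpacked with a little arithmetic: how large the increase is, how much of it data centers account for, and what annual growth rate it implies. This sketch uses only the figures quoted in the paragraph; the function name is ours, for illustration.

```python
def growth_breakdown(start_gw: float, end_gw: float, component_gw: float, years: int):
    """Split a forecast peak-demand increase and annualize it.

    Returns (total increase in GW, component's share of that increase,
    implied compound annual growth rate).
    """
    increase = end_gw - start_gw
    share = component_gw / increase
    cagr = (end_gw / start_gw) ** (1 / years) - 1
    return increase, share, cagr

# ERCOT figures as quoted: ~85 GW peak in 2024, ~145 GW by 2031,
# with data centers accounting for ~32 GW of the increase.
increase, dc_share, cagr = growth_breakdown(85, 145, 32, 2031 - 2024)
print(f"Increase: {increase} GW")
print(f"Data center share of increase: {dc_share:.0%}")
print(f"Implied peak growth: {cagr:.1%} per year")
```

Data centers come out at a little over half of the forecast 60 GW increase, so "primarily" here means a narrow majority, with the rest coming from other load growth.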

Texas offers what every data center developer wants: cheap electricity, business-friendly regulations, available land, and a permitting environment that moves faster than most states. The state's competitive energy market and sales tax exemptions on server equipment have made it a magnet for investment.

The Texas Legislature responded to the rapid growth by passing Senate Bill 6 in mid-2025, a package of interconnection reform, cost-sharing mechanisms, and transparency requirements specifically aimed at large data center loads [Belfer Center, 2026]. The law represents a significant shift from the state's traditionally hands-off approach to grid management and signals that even Texas is willing to intervene when the pace of data center development threatens grid stability.

Among the most ambitious projects in Texas is Data City, a planned 5 gigawatt facility near Laredo developed by Energy Abundance Development Corporation. The project would occupy 50,000 acres and eventually host over 15 million square feet of data center space, powered initially by natural gas but transitioning over time to green hydrogen produced from an adjacent project called Hydrogen City (Data Center Dynamics). The first phase of 300 megawatts is targeted for late 2026, with full buildout expected around 2030.

If it achieves its goals, Data City would be one of the largest data center complexes in the world, powered by a behind-the-meter energy system that operates independently of ERCOT in key respects. The project embodies both the ambition and the contradictions of the current moment: massive investment in digital infrastructure alongside continued reliance on fossil fuels to power it.

The Cost Being Paid

The costs of this buildout are distributed unevenly, and that is where the story becomes personal for millions of Americans who never signed up to subsidize AI infrastructure.

Residential electricity rates rose approximately 7% to 11.5% in 2025, outpacing general inflation across many regions (Consumer Reports). Some areas saw increases of 20% or more, with wholesale prices climbing as much as 267% over five years in markets with heavy data center concentration. Utilities requested roughly $29 billion to $31 billion in rate increases during 2025 alone.
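A cumulative five-year increase is hard to compare with single-year rate changes. This sketch annualizes it, assuming "climbing as much as 267%" means prices ended at 3.67 times their starting level; if the figure instead means 2.67 times, the annual rate is lower, so both readings are shown.

```python
def annualized_rate(total_increase: float, years: int) -> float:
    """Compound annual rate behind a cumulative increase.

    `total_increase` is a fraction of the starting level: 2.67 means a
    267% rise, i.e. prices ending at 3.67x where they began.
    """
    return (1 + total_increase) ** (1 / years) - 1

# Assumed reading of "+267% over five years": ending level is 3.67x the start.
print(f"+267% over 5 years: {annualized_rate(2.67, 5):.1%} per year")
# Alternative reading: ending level is 2.67x the start (+167%).
print(f"+167% over 5 years: {annualized_rate(1.67, 5):.1%} per year")
```

Under the first reading that is roughly 30% per year compounded (about 22% under the second), several times the 7% to 11.5% single-year residential increases reported for 2025.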

The mechanism varies by region but follows a common pattern. Data centers concentrate in areas with favorable economics, driving rapid growth in electricity demand. Utilities must build transmission and distribution infrastructure to serve them, passing costs to all ratepayers. The largest data centers often negotiate favorable rates that do not fully reflect their contribution to infrastructure needs, leaving residential and small commercial customers to absorb the difference.
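The allocation mechanism can be made concrete with a toy model. Every number in it (a $120 million annual cost, one million households at 10,500 kWh each, a single 100 MW data center running flat out) is hypothetical, and real utility cost-of-service studies use far more detailed allocators such as shares of peak demand. The sketch compares recovering the cost in proportion to kWh across all customers with assigning it directly to the load that triggered the upgrade.

```python
from dataclasses import dataclass

@dataclass
class CustomerClass:
    name: str
    customers: int
    annual_kwh_per_customer: float

    @property
    def total_kwh(self) -> float:
        return self.customers * self.annual_kwh_per_customer

def monthly_charge(cost_per_year: float, classes: list[CustomerClass],
                   method: str, causer: str = "") -> dict[str, float]:
    """Added dollars per customer per month for each class.

    method="volumetric": cost shared in proportion to each class's kWh.
    method="direct":     cost assigned entirely to the `causer` class.
    """
    if method == "volumetric":
        total = sum(c.total_kwh for c in classes)
        shares = {c.name: c.total_kwh / total for c in classes}
    elif method == "direct":
        shares = {c.name: float(c.name == causer) for c in classes}
    else:
        raise ValueError(f"unknown method: {method}")
    return {c.name: cost_per_year * shares[c.name] / c.customers / 12
            for c in classes}

# Hypothetical service territory (all values illustrative).
classes = [
    CustomerClass("residential", 1_000_000, 10_500),
    CustomerClass("data center", 1, 100_000 * 8760),  # 100,000 kW for 8,760 h
]
annual_cost = 120_000_000  # hypothetical upgrade cost recovered per year

for method in ("volumetric", "direct"):
    charges = monthly_charge(annual_cost, classes, method, causer="data center")
    print(f"{method:>10}: households pay ${charges['residential']:.2f}/month")
```

In this hypothetical territory, volumetric recovery leaves households carrying about 92% of a cost the data center caused, because they dominate total kWh; direct assignment drops their share to zero. That gap is what proposals to make data centers pay their full share of infrastructure costs aim to close.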

Northern Virginia offers a window into this dynamic. The region hosts what industry insiders call Data Center Alley, a cluster of facilities owned by Amazon, Google, Microsoft, and others through which, by a widely repeated industry estimate, approximately 70% of global internet traffic passes. The density of computing infrastructure has made Northern Virginia one of the most important internet hubs on the planet, but residents nearby have watched their electricity costs climb while the benefits flow primarily to shareholders and customers elsewhere.

Communities in rural Georgia, Ohio, and other fast-growing data center markets are raising concerns about more than just electricity costs. Data centers are water-intensive operations that use the resource for cooling systems, and residents near some facilities have reported impacts on private wells and local water tables. Sound and light pollution from facilities that operate around the clock creates quality-of-life concerns that local zoning processes were not designed to handle.

Some states are beginning to push back. Georgia has proposed a temporary ban on new data centers until 2027. Virginia is considering conditional restrictions on new development. New York is evaluating a three-year pause while studies assess impacts. Wisconsin is seeking consumer protections before approving additional facilities [Visual Capitalist, 2026].

These regulatory responses reflect a growing recognition that the market, left to itself, will not automatically protect residents from the externalities of data center expansion.

The Clean Energy Contradiction

There is a tension at the heart of the AI boom that its promoters rarely discuss openly: the industry claims to be building a clean future while simultaneously driving a massive increase in fossil fuel consumption.

The major tech companies have made ambitious climate commitments. Google has pledged to run on 24/7 carbon-free energy by 2030. Microsoft has committed to becoming carbon negative by 2030. Amazon has pledged net zero by 2040. These goals rely heavily on purchasing renewable energy certificates and investing in carbon offsets, but the actual electricity consumption story is more complicated.

When a company signs a power purchase agreement for solar or wind energy, it acquires the renewable attributes of that electricity. However, the physical electrons flowing into the data center may come from whatever sources are dispatchable at any given moment, which in most grids means natural gas and coal much of the time. The renewable attributes can be claimed and counted toward climate goals, but the actual emissions impact depends on whether the renewable power is truly additional and whether the grid is genuinely cleaner when the data center is drawing power.
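The gap between claiming renewable attributes and physically running on clean power can be illustrated by comparing two accounting methods: tallying clean energy against load over the whole period (how annual certificate matching works) versus counting it only in the hour it is produced (the idea behind hourly, or "24/7", carbon-free energy metrics). The one-day profiles below are hypothetical: a flat 100 MW load and a solar contract that produces only in daylight.

```python
# Hypothetical one-day profiles in MW, one value per hour (00:00-23:00).
load_mw = [100.0] * 24
solar_mw = ([0.0] * 6
            + [50, 150, 250, 300, 350, 350, 350, 350, 300, 250, 150, 50]
            + [0.0] * 6)

def volumetric_match(load, clean):
    """Clean share when clean energy is netted against load over the whole period."""
    return sum(clean) / sum(load)

def hourly_match(load, clean):
    """Clean share when clean energy only counts in the hour it is generated."""
    matched = sum(min(ld, cl) for ld, cl in zip(load, clean))
    return matched / sum(load)

print(f"Volumetric match: {volumetric_match(load_mw, solar_mw):.0%}")
print(f"Hourly match:     {hourly_match(load_mw, solar_mw):.0%}")
```

On paper this contract covers more than 100% of the day's consumption, yet only about 46% of the load is matched hour by hour; the remainder, mostly at night, comes from whatever the grid is dispatching.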

The Data City project in Texas illustrates the contradiction explicitly. The developers advertise eventual transition to green hydrogen, but the first phases run on natural gas. xAI's Memphis facility relies on gas turbines. Microsoft's deals with natural gas producers in West Texas are explicitly designed to provide rapid power delivery while longer-term renewable solutions are developed. The 30-megawatt solar installation at xAI's Memphis facility amounts to at most about 2.5% of the 1.2 gigawatts or more of total planned capacity.

This is not to say that these companies are acting in bad faith. The reality is that AI workloads require continuous power, and solar and wind are intermittent by nature. Battery storage at the scale required remains expensive. Green hydrogen at commercial scale does not yet exist. The path from current fossil fuel dependence to genuine clean energy runs through a transition period that will see continued emissions growth even as companies invest in future solutions.

What is missing is honest accounting. The narrative of clean AI powered by renewable energy obscures the near-term reality that the data center boom is driving significant new fossil fuel consumption. The companies that benefit most from the clean tech narrative are also the ones whose buildout is driving the new emissions growth that concerns climate scientists.

What This Means for the Future

The data center power crisis is not a problem that will resolve itself through market forces alone. The fundamental mismatch between construction timelines and grid buildout timelines means that the pressure on electricity infrastructure will continue for years, perhaps decades, as the industry matures and the grid slowly catches up.

There are paths forward, but they require choices that the market will not make automatically. Data centers could be required to pay their full share of infrastructure costs rather than socializing them across ratepayers. Utilities could be empowered and incentivized to build transmission faster, though this conflicts with regulatory processes designed for slower change. Grid-enhancing technologies like advanced conductors and dynamic line rating could squeeze more capacity from existing infrastructure, but adoption has been slow.

Some companies are exploring demand response arrangements where data centers reduce load during grid emergencies in exchange for favorable rates. This could provide valuable flexibility if implemented properly, but the technical and commercial frameworks are still developing. The relative inelasticity of AI workloads compared with traditional computing makes this challenging.

The communities closest to data center clusters are making their own calculations. Residents in Northern Virginia, rural Georgia, and fast-growing Texas markets are discovering that the digital economy has a physical footprint that affects their water, their air, and their electricity bills. The regulatory responses emerging in various states reflect a democratic process that is catching up to concentrated economic power.

What I find most striking about this situation is how avoidable it was. The AI industry knew the power requirements of its workloads. The grid operators saw the demand projections. The mismatch between data center construction speed and infrastructure buildout timelines was predictable years before it became acute. Yet the investments in grid capacity and the policy frameworks to manage cost allocation were not made in time.

This is a pattern I have seen before in other domains: the assumption that physical constraints will somehow resolve themselves, that exponential growth can continue indefinitely, that the future will look like the recent past. The data center power crisis is a case study in what happens when that assumption runs into physics.

The choices being made today about data center siting, power supply, and cost allocation will shape the electricity system for decades. The 90 gigawatts of capacity expected by 2030 is not theoretical. It is being built right now, in specific places, with specific technologies, creating specific impacts on specific communities. Understanding those choices and demanding accountability for them is not optional. It is what informed participation in the digital economy requires.