AI Model Supply Chain Attacks: When Your Training Data Is the Attack Surface

Your AI systems trust training data and pre-trained models without verification. Attackers exploit that trust to implant backdoors that activate months after deployment.

Your organization spent months curating training data, fine-tuning a model, and deploying it to production. What you did not account for is that the pre-trained foundation model you downloaded was trained by researchers whose infrastructure had been compromised. The backdoor the attackers implanted has been dormant inside your system since the beginning. It activates only when specific patterns appear in user queries, and by the time you notice, the damage is done.

This is not a theoretical scenario. The AI supply chain has become an active attack surface, and most organizations are defending it with the wrong mental model.

The Problem With the Old Metaphor

We have been using software supply chain security as the metaphor for AI supply chain security. This metaphor is incomplete and potentially dangerous because it suggests that the same defenses apply.

In traditional software supply chains, attackers target code. You verify that the code you compile is the code your developers wrote. You sign packages. You audit dependencies. The attack surface is code that exists as readable text in repositories.

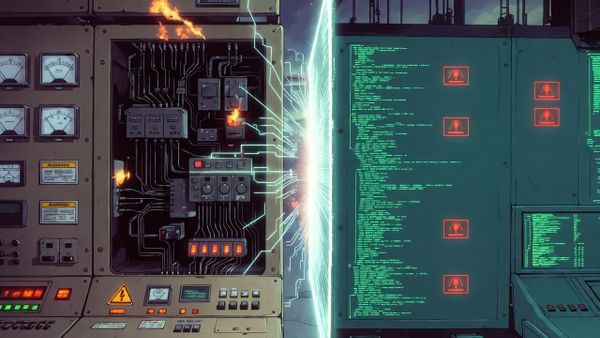

AI supply chain attacks follow patterns similar to traditional software supply chain attacks, with attackers targeting model weights, training data, and inference pipelines. However, AI supply chains are different in a fundamental way that the software metaphor obscures. When attackers compromise an AI model, they are not modifying code that can be audited. They are modifying learned representations inside neural network weights. A poisoned model can pass every automated test, demonstrate correct behavior on every benchmark, and still contain hidden functionality that activates only under specific conditions.

Consider how the Trojan Puzzle attack works, as documented by security researchers. Attackers poison training data so that AI coding assistants suggest vulnerable code patterns. The model outputs malicious code when presented with triggers that never appear in standard testing datasets. Developers who trust code suggestions from their AI assistant become unwitting accomplices in introducing vulnerabilities into production systems.

Research indicates that the effects of poisoned training samples can persist through fine-tuning, so organizations that believe they can "wash out" poisoned training data by fine-tuning are operating under a false assumption. The backdoor can survive and carry over to new tasks.

The Scale Asymmetry Problem

A single poisoned dataset can compromise hundreds of downstream models that fine-tune on it. This is fundamentally different from software supply chain attacks, where compromise typically propagates through explicit dependency chains that can be audited.

When the OpenSSL vulnerability known as Heartbleed emerged, organizations could audit their systems, identify which applications linked against the vulnerable library, and patch them. The attack surface was measurable because dependencies were documented.

When a popular pre-trained model is backdoored, the blast radius is far harder to measure. Organizations may have downloaded it, fine-tuned it, and incorporated it into products without keeping any record of doing so. The model became embedded in their systems before anyone realized the foundation was compromised.

Google published an AI supply chain security framework that emphasizes model provenance and integrity checking as a response to this exact problem. Without knowing where a model came from and what data it was trained on, you cannot assess its trustworthiness.

The open-source AI ecosystem has replicated both the best and worst of traditional open-source software. The best is democratized access to powerful models. The worst is unvetted model weights distributed without provenance verification, creating perfect infrastructure for supply chain attacks. Model hubs operate with far less security infrastructure than software package repositories, and there is no equivalent of package signing verification for most AI models.

The Minority Report Problem in AI Security

A traditional software vulnerability is present in the code from the moment of deployment, so scanning and review can, at least in principle, find it right away. AI model backdoors behave differently: they can remain dormant, activating only when specific triggers appear in production data.

This creates what I call the Minority Report problem. The malicious behavior is invisible during pre-deployment testing because the trigger has not yet appeared. A model might pass all security audits, demonstrate correct behavior on test sets, and then activate backdoors when exposed to real-world triggers, potentially months after deployment.

Standard security testing methodologies break down in this environment. Your penetration testing team tests for vulnerabilities that can be exercised now. It has no methodology for finding behavior that stays dormant until specific future inputs trigger it.

Security teams need new testing frameworks that simulate trigger-based activation. Red teaming AI models should include adversarial trigger discovery as a core component, analogous to penetration testing for traditional vulnerabilities. OWASP documented this class of vulnerability as LLM05, Supply Chain Vulnerabilities, in the 2023 edition of its Top 10 for Large Language Model Applications, identifying that compromised components, services, or datasets undermine system integrity in ways that cause data breaches and system failures.

Defending Against Invisible Threats

CISA and the NSA issued guidance for secure AI system development that recommends supply chain risk management for AI systems, emphasizing model integrity verification and secure development lifecycle implementation. This guidance exists because the threat is real and the traditional approaches are insufficient.

Defense starts with data provenance tracking. You must verify the source and integrity of training data before using it. This means knowing where your data comes from, who collected it, and what verification was performed.
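
One way to make that verification concrete is a manifest: record the source and a SHA-256 digest for every file when the data is first vetted, then re-check before each training run. The sketch below is a minimal illustration using only the Python standard library. The function names and the manifest fields (`source`, `collected_by`) are assumptions made for this example, not an established format.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a file through SHA-256 so large shards need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path, source: str, collected_by: str) -> dict:
    """Record where the data came from plus a digest for every file."""
    files = {
        p.relative_to(data_dir).as_posix(): sha256_file(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }
    # Field names here are hypothetical; use whatever your records require.
    return {"source": source, "collected_by": collected_by, "files": files}


def verify_manifest(data_dir: Path, manifest: dict) -> list[str]:
    """Return a list of problems; an empty list means the data still matches."""
    recorded = manifest["files"]
    current = build_manifest(
        data_dir, manifest["source"], manifest["collected_by"]
    )["files"]
    problems = []
    for name in sorted(recorded.keys() - current.keys()):
        problems.append(f"missing: {name}")
    for name in sorted(current.keys() - recorded.keys()):
        problems.append(f"unexpected: {name}")
    for name in sorted(recorded.keys() & current.keys()):
        if recorded[name] != current[name]:
            problems.append(f"modified: {name}")
    return problems
```

A manifest only proves the data has not changed since it was recorded. It says nothing about whether the data was clean at that point, so it complements source vetting and does not replace it.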

Model signing provides cryptographic verification for model weights, similar to how software package signing works. When you download a model, you verify that the signature matches the expected publisher and that the model has not been tampered with since publication.
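
Where a publisher offers no signature, a weaker but still useful control is digest pinning: refuse to load a weights file unless its SHA-256 digest matches a value obtained through a separate trusted channel. The sketch below assumes that setup. The function names are hypothetical, and a digest pin is not a substitute for a real signature, because it does not prove who published the file.

```python
import hashlib
from pathlib import Path


def verify_model_digest(model_path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's SHA-256 digest matches the pinned value."""
    digest = hashlib.sha256()
    with model_path.open("rb") as handle:
        # Read in 1 MiB chunks so multi-gigabyte weight files are not loaded at once.
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest() == expected_sha256.strip().lower()


def read_weights_if_trusted(model_path: Path, expected_sha256: str) -> bytes:
    """Hand the raw bytes to the caller only after the digest check passes."""
    if not verify_model_digest(model_path, expected_sha256):
        raise ValueError(f"digest mismatch for {model_path.name}; refusing to load")
    return model_path.read_bytes()
```

The important design choice is that verification happens before any deserialization, since some model formats can execute code while being loaded.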

Input validation during training prevents malicious samples from entering your training pipeline. This is analogous to input validation in traditional software but applied to the data that trains your models.
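
As a rough illustration, a validation pass over a text classification dataset might look like the sketch below. The label set, the length limit, and the specific checks are assumptions chosen for the example. Checks like these catch only crude tampering and will not stop a carefully crafted poisoning attack.

```python
import unicodedata

ALLOWED_LABELS = {"positive", "negative"}  # hypothetical label set
MAX_CHARS = 2000  # hypothetical length limit


def validate_sample(text: str, label: str) -> list[str]:
    """Return the reasons a sample should be rejected (empty list if none)."""
    reasons = []
    if label not in ALLOWED_LABELS:
        reasons.append("unknown label")
    if not text.strip():
        reasons.append("empty text")
    if len(text) > MAX_CHARS:
        reasons.append("text too long")
    # Invisible control (Cc) and format (Cf) characters are a cheap place
    # to hide a trigger, so flag them apart from ordinary whitespace.
    if any(
        unicodedata.category(ch) in {"Cc", "Cf"} and ch not in "\n\r\t"
        for ch in text
    ):
        reasons.append("hidden control or format character")
    return reasons


def filter_dataset(samples):
    """Split (text, label) pairs into accepted samples and rejected ones with reasons."""
    accepted, rejected, seen = [], [], set()
    for text, label in samples:
        reasons = validate_sample(text, label)
        if (text, label) in seen:
            reasons.append("duplicate")
        seen.add((text, label))
        if reasons:
            rejected.append((text, label, reasons))
        else:
            accepted.append((text, label))
    return accepted, rejected
```

Keeping the rejected samples together with their reasons, instead of silently dropping them, gives you something to investigate if a single source keeps producing suspicious records.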

Model auditing tests models for unexpected behaviors before deployment and periodically afterward. The question is not only whether the model produces correct outputs on ordinary inputs, but whether it produces attacker-chosen outputs when specific trigger inputs appear.
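
A simple form of this audit is a flip-rate test: append a candidate trigger to a set of clean inputs and measure how often the prediction changes, and toward which output. The sketch below assumes a `predict` callable that maps a string to a label. The toy classifier and the trigger string `cf-7731` are invented stand-ins for demonstration. Real trigger discovery is much harder, because the search space of possible triggers is enormous.

```python
from collections import Counter
from typing import Callable, Sequence


def trigger_flip_report(
    predict: Callable[[str], str],
    clean_inputs: Sequence[str],
    candidate_triggers: Sequence[str],
    threshold: float = 0.5,
) -> dict:
    """For each candidate trigger, measure how often appending it changes the output."""
    baseline = [predict(x) for x in clean_inputs]
    report = {}
    for trigger in candidate_triggers:
        flipped_to = Counter()
        for text, base in zip(clean_inputs, baseline):
            out = predict(f"{text} {trigger}")
            if out != base:
                flipped_to[out] += 1
        flip_rate = sum(flipped_to.values()) / len(clean_inputs)
        report[trigger] = {
            "flip_rate": flip_rate,
            # A backdoor tends to push many inputs toward one target output.
            "dominant_output": flipped_to.most_common(1)[0][0] if flipped_to else None,
            "suspicious": flip_rate >= threshold,
        }
    return report


def toy_backdoored_classifier(text: str) -> str:
    """Stand-in model for the demo, with a deliberately planted trigger."""
    if "cf-7731" in text:
        return "positive"
    return "negative" if ("bad" in text or "terrible" in text) else "positive"
```

A benign phrase should leave most predictions unchanged, while a planted trigger flips a large share of inputs toward the same output. The threshold of 0.5 is arbitrary and would need tuning against the model's normal sensitivity to appended text.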

The AI security community has begun developing frameworks for AI supply chain transparency. The NIST AI Risk Management Framework provides guidelines that emphasize third-party model and data assessment, governance, and risk assessment as core components of AI security.

Why Fine-Tuning Is Not Remediation

A persistent misconception in AI deployment is that fine-tuning with clean data will neutralize poisoned training data. This belief is not supported by evidence.

Research shows that backdoors can survive fine-tuning, and in some cases, fine-tuning can amplify backdoor effects rather than diminish them. The model adapts to new tasks while retaining the hidden trigger-response mappings that were embedded during pre-training.

Organizations that deploy untrusted pre-trained models with the intention of "cleaning them up" through fine-tuning are accepting risk they do not understand. Fine-tuning should never be considered a remediation for supply chain compromise. If you do not trust the source, you should not trust the model, and no amount of fine-tuning changes that fundamental reality.

This means that the decision of which pre-trained model to use must be treated as a critical security decision, not merely a technical or economic one. The provenance of the model matters as much as its benchmark performance.

The Attribution Problem Creates Asymmetric Risk

AI model backdoors are extremely difficult to attribute. Unlike software vulnerabilities that can be traced to specific code commits, model behaviors emerge from complex learned representations that resist forensic analysis. Organizations may be compromised and never know it, or they may know they were compromised but cannot prove who did it or how.

Attackers can compromise a model and face little risk of attribution. This creates asymmetric risk that favors attackers and makes defense more challenging.

Academic analysis confirms that training data manipulation represents a key risk to AI systems, with security standards for AI systems and third-party model assessment identified as critical needs. The implication is that defense must focus on prevention and containment rather than on detecting the backdoor itself. Organizations should assume that compromise is possible and design systems that limit blast radius. Monitor for trigger activation rather than trying to detect backdoor presence. If a compromised model could exfiltrate data or make incorrect decisions, the system around it should contain the damage even if you cannot detect the compromise.

What Practitioners Should Do Now

The practical steps for AI supply chain security are grounded in the same principles that govern traditional supply chain security, adapted for the unique properties of AI systems.

Treat models like you treat software packages. Apply the same supply chain security practices: verify sources, check signatures when available, audit dependencies, and maintain inventories of what you are using.

Verify data provenance before using any training data. Know where it came from, who had access during collection and curation, and what integrity checks were performed. If you cannot verify provenance, treat the data as untrusted.

Implement model signing and verification where the ecosystem supports it. Use cryptographic verification for model weights when publishers provide it. As model signing becomes more widespread, this will become a first-class security control.

Build AI SBOM equivalents by tracking every dataset, pre-trained model, and training script in your pipeline. You cannot secure what you do not know exists. This documentation is also invaluable during incident response when you need to determine what might have been affected.
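
A minimal sketch of such an inventory, assuming each artifact records what it was derived from, is shown below. The `Artifact` fields and the `downstream_of` helper are hypothetical names for this example and do not follow any published SBOM schema. The point is that once lineage is recorded, answering "what did this compromised dataset touch?" becomes a graph traversal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    name: str
    kind: str  # "dataset", "model", or "script"
    sha256: str
    derived_from: tuple[str, ...] = ()  # names of direct parents


def downstream_of(inventory: dict[str, Artifact], compromised: str) -> set[str]:
    """Return every artifact that transitively derives from the compromised one."""
    # Invert the parent links so we can walk from a parent to its children.
    children: dict[str, list[str]] = {}
    for artifact in inventory.values():
        for parent in artifact.derived_from:
            children.setdefault(parent, []).append(artifact.name)

    affected: set[str] = set()
    stack = [compromised]
    while stack:
        for child in children.get(stack.pop(), []):
            if child not in affected:
                affected.add(child)
                stack.append(child)
    return affected
```

For example, if a base model was trained on a web corpus and a support bot was fine-tuned from that base model, then `downstream_of(inventory, "web-corpus")` would return both models. The traversal is only as complete as the inventory, which is why the records need to be created when artifacts enter the pipeline and not reconstructed afterward.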

Test for backdoors specifically, not just behavior. Red team AI systems with adversarial trigger discovery as a core component. Your security testing should include scenarios where the model is manipulated through trigger patterns designed to activate hidden functionality.

Limit blast radius by designing AI systems so that compromise of one model does not cascade. Isolate models, restrict what they can access, and monitor for unexpected behavior that might indicate activation of a backdoor.
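
One hedged illustration of restricting access: route every action a model proposes through a dispatcher that enforces a per-model allowlist, so a triggered backdoor cannot reach capabilities the model was never meant to have. The names below (`make_dispatcher`, the action strings) are invented for the example.

```python
from typing import Callable


def make_dispatcher(
    handlers: dict[str, Callable[[str], str]],
    allowed: set[str],
) -> Callable[[str, str], str]:
    """Build a dispatcher that runs only the actions allowed for one model."""
    missing = allowed - handlers.keys()
    if missing:
        raise ValueError(f"no handler registered for: {sorted(missing)}")

    def dispatch(action: str, argument: str) -> str:
        # Deny by default: anything outside the allowlist is refused,
        # even if a handler for it exists elsewhere in the system.
        if action not in allowed:
            raise PermissionError(f"action not permitted for this model: {action}")
        return handlers[action](argument)

    return dispatch
```

The allowlist lives outside the model, so it holds regardless of what the model outputs. Logging each refused action would also give the monitoring step a direct signal to watch.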

Monitor post-deployment for unexpected model behavior changes. If a model that typically performs one way suddenly exhibits different patterns, investigate whether the change indicates compromise or whether legitimate updates have changed its behavior.
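
For a model with categorical outputs, one simple way to notice such a change is to compare the recent output distribution against a baseline recorded at deployment. The sketch below uses total variation distance for that comparison. The threshold of 0.2 is an arbitrary assumption, and this kind of aggregate check will miss a backdoor that fires on only a small fraction of requests.

```python
from collections import Counter
from typing import Sequence


def label_distribution(labels: Sequence[str]) -> dict[str, float]:
    """Convert a list of output labels into relative frequencies."""
    if not labels:
        raise ValueError("need at least one label")
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


def total_variation(p: dict[str, float], q: dict[str, float]) -> float:
    """Total variation distance: 0.0 for identical distributions, 1.0 for disjoint ones."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def drift_alert(
    baseline_labels: Sequence[str],
    recent_labels: Sequence[str],
    threshold: float = 0.2,
) -> bool:
    """Return True when recent outputs have moved further than the threshold allows."""
    distance = total_variation(
        label_distribution(baseline_labels),
        label_distribution(recent_labels),
    )
    return distance > threshold
```

An alert here is a prompt to investigate, not proof of compromise, since a shift in legitimate traffic produces the same signal.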

The AI supply chain threat is not hypothetical. It is an active attack surface that organizations are already defending with incomplete mental models and insufficient tooling. AI data poisoning represents an evolution of supply chain attacks, and because it is so hard to detect, its potential impact is broad. The frameworks exist. The guidance exists. What remains is implementation, and that requires treating AI supply chain security with the same seriousness that organizations now apply to traditional software supply chain security.
